8 Content Engagement Metrics That Predict Subscriber Lifetime Value

Media publishers face a persistent challenge: determining which subscribers will stay, spend, and engage for years versus those who'll churn after their first billing cycle. Traditional metrics like page views or session counts tell you what happened, but they don't reliably predict what comes next. By tracking specific engagement patterns, data analysts can build predictive models that identify high-value subscribers early, allowing editorial and retention teams to allocate resources where they'll generate the most revenue.

Content Depth Score: Beyond Surface-Level Scrolling

Content depth score measures how thoroughly users consume individual articles, combining scroll depth, time spent, and interaction signals like highlighting or sharing. Unlike simple scroll tracking, this composite metric identifies readers who genuinely engage with material rather than bouncing after scanning headlines. A reader who consistently reaches 80% depth on long-form journalism demonstrates qualitatively different intent than someone who skims three paragraphs before leaving.
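
The source does not give a formula for the composite, so the following is only a minimal sketch of one possible content depth score. It assumes each article read is logged with scroll depth, active reading time, an estimated reading time for the article, and a count of interactions such as highlights or shares. The field names and the 50/30/20 weights are hypothetical and should be tuned against your own retention data.

```python
from dataclasses import dataclass


@dataclass
class ArticleRead:
    scroll_depth: float      # fraction of the article scrolled, 0.0-1.0
    seconds_on_page: float   # active reading time
    expected_seconds: float  # estimated reading time (hypothetical field)
    interactions: int        # highlights, shares, saves during the visit


# Hypothetical weights; not taken from any published model.
WEIGHTS = {"scroll": 0.5, "time": 0.3, "interaction": 0.2}


def content_depth_score(read: ArticleRead) -> float:
    """Return a 0-100 composite depth score for a single article read."""
    scroll = min(max(read.scroll_depth, 0.0), 1.0)
    # Cap time at the expected reading time so idle tabs don't inflate it.
    if read.expected_seconds > 0:
        time_ratio = min(read.seconds_on_page / read.expected_seconds, 1.0)
    else:
        time_ratio = 0.0
    # Any interaction counts as a full signal; extra ones add nothing.
    interaction = 1.0 if read.interactions > 0 else 0.0
    score = (WEIGHTS["scroll"] * scroll
             + WEIGHTS["time"] * time_ratio
             + WEIGHTS["interaction"] * interaction)
    return round(100 * score, 1)
```

A reader who reaches 80% depth, spends 4 of an expected 5 minutes, and highlights one passage (`ArticleRead(0.8, 240, 300, 1)`) scores 84.0, while someone who skims 15% of the same piece in 20 seconds scores under 10.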

Research from the Reuters Institute found that [news subscribers who read at least three articles per week are 2.5 times more likely to renew](https://reutersinstitute.politics.ox.ac.uk/digital-news-report/2023) compared to occasional readers, but the depth of that reading matters as much as frequency. Publishers who segment users by content depth often discover that 20% of subscribers account for 60% of total content consumption, and these power users typically stay subscribed for around three years, compared with roughly nine months for casual subscribers. This concentration effect means that identifying depth patterns early allows you to nurture high-potential subscribers before competitors do.
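
The 20/60 split above is a typical pattern, not a universal constant, so it is worth checking against your own data. A minimal sketch, assuming you can export one consumption total per subscriber (for example, articles read in a quarter):

```python
def top_share(consumption: list[float], top_fraction: float = 0.2) -> float:
    """Share of total consumption produced by the top `top_fraction` of subscribers."""
    if not consumption:
        return 0.0
    ranked = sorted(consumption, reverse=True)
    # Always include at least one subscriber in the "top" group.
    k = max(1, round(len(ranked) * top_fraction))
    total = sum(ranked)
    return sum(ranked[:k]) / total if total else 0.0
```

For ten subscribers with totals `[30, 30, 5, 5, 5, 5, 5, 5, 5, 5]`, `top_share` returns 0.6: the top two readers account for 60% of consumption.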

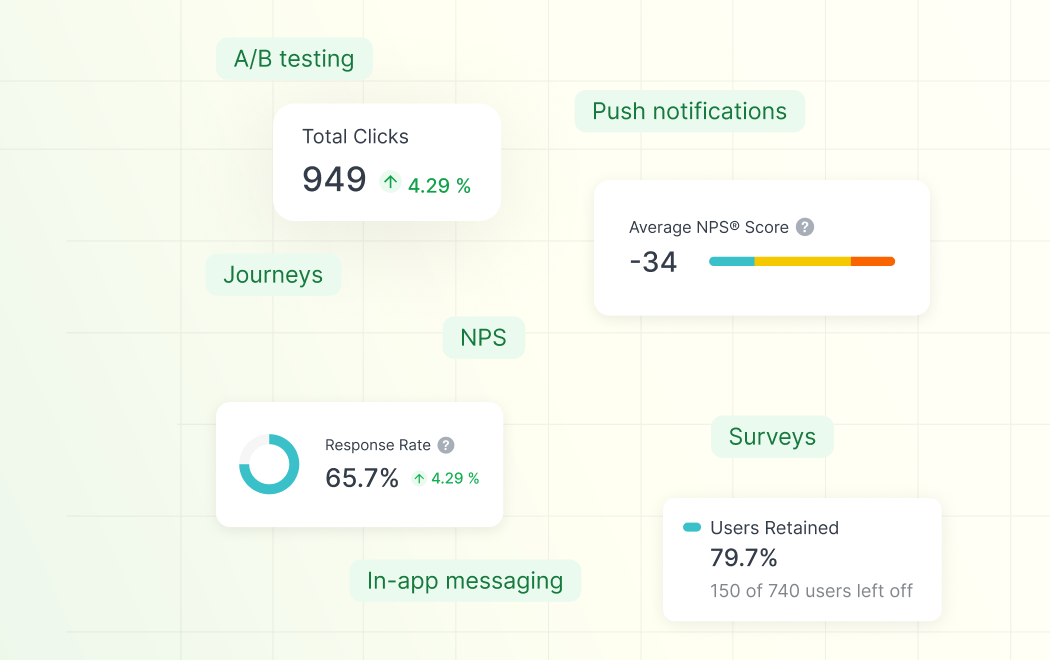

For media organizations, content depth becomes actionable when you can correlate it with specific content types or topics. If a subscriber reads all climate coverage in depth but skims political news, your recommendation engine should prioritize environmental journalism in their feed. Analytics platforms like Countly, Mixpanel, or Amplitude can track these patterns across user cohorts, letting you build segments based on topical depth rather than generic engagement scores.
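
One way to turn topical depth into a recommendation input is to average depth scores per topic for each subscriber and keep the topics they read thoroughly. This is a sketch under stated assumptions: reads arrive as `(topic, depth_score)` pairs on a 0-100 scale, and the 70-point threshold and three-read minimum are hypothetical values, not recommendations from the source.

```python
from collections import defaultdict
from statistics import mean


def preferred_topics(reads: list[tuple[str, float]],
                     threshold: float = 70.0,
                     min_reads: int = 3) -> list[str]:
    """Topics this subscriber reads in depth, strongest first.

    reads: (topic, depth_score) pairs, depth scores on a 0-100 scale.
    """
    by_topic: dict[str, list[float]] = defaultdict(list)
    for topic, score in reads:
        by_topic[topic].append(score)
    averages = {
        topic: mean(scores)
        for topic, scores in by_topic.items()
        if len(scores) >= min_reads  # too few reads to judge reliably
    }
    qualifying = [t for t, avg in averages.items() if avg >= threshold]
    return sorted(qualifying, key=lambda t: averages[t], reverse=True)
```

For the climate reader described above, deep climate reads and skimmed political reads yield `["climate"]`, which a recommendation engine or email segment could then prioritize.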

Cross-Format Consumption Patterns

Subscribers who consume content across multiple formats—articles, podcasts, newsletters, video—consistently show higher lifetime value than single-format users. This cross-pollination indicates deeper integration of your media brand into their daily routines and information consumption habits. Someone who reads your morning newsletter, listens to your afternoon podcast, and watches your documentary series has built multiple touchpoints that create switching costs when considering cancellation.

The behavioral economics here are straightforward: each additional format creates a separate habit loop. A subscriber might forget to visit your website for a week, but if they're also podcast listeners, that audio habit maintains the relationship during lapses in reading behavior. Cross-format users also demonstrate lower price sensitivity because they perceive greater value from their subscription investment, making them more resilient during economic downturns or when competitors launch promotional pricing.

Tracking cross-format engagement requires connecting user identifiers across platforms, which presents technical challenges for media organizations with legacy systems. You need unified event tracking that captures whether user ID 847392 read three articles on mobile, downloaded two podcast episodes, and opened four newsletters this week. Modern product analytics stacks accomplish this through SDK implementations that share user identifiers across web, mobile apps, and email platforms, creating a single customer view.
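
Once events share a user identifier, the weekly cross-format view is a simple aggregation. A minimal sketch, assuming a unified stream of `(user_id, event_name)` tuples; the event names and their mapping to formats are hypothetical placeholders for whatever your tracking plan defines.

```python
from collections import Counter, defaultdict

# Hypothetical mapping from tracked event names to content formats.
FORMAT_BY_EVENT = {
    "article_read": "article",
    "podcast_download": "podcast",
    "newsletter_open": "newsletter",
    "video_play": "video",
}


def weekly_format_summary(events: list[tuple[int, str]]) -> dict[int, dict]:
    """Per-user event counts by format, plus the number of distinct formats used."""
    counts: dict[int, Counter] = defaultdict(Counter)
    for user_id, event_name in events:
        fmt = FORMAT_BY_EVENT.get(event_name)
        if fmt is not None:  # skip events that aren't content consumption
            counts[user_id][fmt] += 1
    return {
        user_id: {"counts": dict(c), "format_count": len(c)}
        for user_id, c in counts.items()
    }
```

For the example subscriber above (three articles, two podcast downloads, four newsletter opens), user 847392 comes out with a `format_count` of 3, which can feed directly into a multi-format segment.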

Return Visit Frequency in the First 30 Days

The first month after subscription conversion establishes behavioral patterns that persist throughout the customer lifecycle. Subscribers who visit your platform 15+ times in their first 30 days show substantially different retention curves than those with 3-5 visits during the same period. This metric works as an early warning system, identifying at-risk subscribers before their first renewal decision approaches.

Early-stage frequency matters because it reflects whether your content has become part of someone's routine or remains a novelty purchase they're exploring. Media brands often see a predictable drop-off pattern: initial enthusiasm in week one, declining visits in week two, and either habit formation or abandonment by week three. By measuring return visit frequency as a leading indicator, you can implement intervention campaigns for subscribers who fall below frequency thresholds, perhaps offering personalized content recommendations or highlighting unused features like newsletters or saved articles.

The challenge with this metric lies in establishing appropriate baselines across different subscriber segments. A daily news junkie might visit 20+ times a month, while someone who subscribes primarily for weekend long-reads might only visit 6-8 times but still represent a stable, high-value subscriber. Context matters, which is why sophisticated retention models segment users by stated preferences during onboarding and measure frequency deviations within those segments rather than applying universal thresholds.
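
Segment-relative thresholds can be expressed in a few lines. This sketch assumes each visit is logged as a date and each subscriber carries an onboarding segment label; the segment names, the baselines of 20 and 7 visits (taken from the ranges in the paragraph above), and the 50% tolerance are all hypothetical.

```python
from datetime import date, timedelta

# Hypothetical first-30-day visit baselines per onboarding segment.
SEGMENT_BASELINES = {"daily_news": 20, "weekend_longreads": 7}


def first_month_visits(subscribed_on: date, visit_dates: list[date]) -> int:
    """Count visits in the 30 days starting on the conversion date."""
    window_end = subscribed_on + timedelta(days=30)
    return sum(1 for d in visit_dates if subscribed_on <= d < window_end)


def is_at_risk(segment: str, visits: int, tolerance: float = 0.5) -> bool:
    """Flag subscribers below `tolerance` x their segment's baseline."""
    return visits < SEGMENT_BASELINES[segment] * tolerance
```

Six visits flags a daily-news subscriber as at risk (below 10) but not a weekend long-read subscriber (above 3.5), which is exactly the distinction a universal threshold would miss.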

Content Recency and Freshness Balance

High-LTV subscribers exhibit a distinctive pattern: they balance consumption of current news with exploration of archive content. This behavior signals that they value your publication as a comprehensive resource rather than just a source for today's headlines. Someone who reads yesterday's feature, today's breaking news, and a three-month-old investigative piece demonstrates brand loyalty that transcends individual stories.

The recency-freshness balance metric quantifies this by tracking the publication date distribution of consumed content. A healthy pattern might show 60% of reading occurring on content published within 72 hours, 25% on content from the past month, and 15% on archive material. Subscribers who exclusively chase breaking news tend to have narrower engagement and higher churn risk because they're essentially using you as a commodity news source rather than a differentiated media brand.

This metric becomes particularly valuable for publications with significant archive value, such as business intelligence platforms, specialized trade publications, or investigative journalism outlets. When users regularly dig into your archives, they're discovering the compounding value of their subscription beyond daily updates. Analytics implementations should tag content with publication dates and track both view events and timestamps, allowing you to calculate recency distributions for each subscriber cohort.
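
With publication dates and read timestamps in hand, the recency distribution is a bucketing exercise. A minimal sketch, assuming reads are exported as `(published_at, read_at)` pairs; the bucket boundaries mirror the 72-hour and one-month split in the example pattern above, with "past month" approximated as 30 days.

```python
from datetime import datetime, timedelta


def recency_mix(reads: list[tuple[datetime, datetime]]) -> dict[str, float]:
    """Share of reads by content age at read time.

    reads: (published_at, read_at) pairs for one subscriber or cohort.
    """
    buckets = {"fresh_72h": 0, "past_month": 0, "archive": 0}
    for published_at, read_at in reads:
        age = read_at - published_at
        if age <= timedelta(hours=72):
            buckets["fresh_72h"] += 1
        elif age <= timedelta(days=30):
            buckets["past_month"] += 1
        else:
            buckets["archive"] += 1
    total = sum(buckets.values())
    return {k: (v / total if total else 0.0) for k, v in buckets.items()}
```

Comparing each subscriber's mix with the cohort average highlights breaking-news-only readers (near 100% in `fresh_72h`) as candidates for archive recommendations.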

Feature Adoption Velocity

Beyond consuming content, high-value subscribers adopt platform features like saved articles, personalized feeds, comment participation, or newsletter customization. Feature adoption velocity measures how quickly after subscription someone begins using these engagement tools, with faster adoption correlating to longer retention. A subscriber who bookmarks articles in week one and customizes their newsletter preferences in week two is building investment in your platform that creates friction around cancellation.

Media platforms often underestimate how feature adoption signals intent. When someone takes the time to configure preferences, create reading lists, or set up notifications, they're making explicit decisions about how your product fits into their life. These micro-commitments accumulate into psychological switching costs. Even if a competitor offers similar content, the inertia of recreating personalized setups keeps subscribers from churning. Measuring which features drive the strongest LTV correlation lets you prioritize onboarding flows and educational campaigns around those specific capabilities.

Product analytics platforms track feature adoption through event instrumentation, logging actions like "saved_article," "customized_feed," or "enabled_push_notifications." The key is measuring not just whether someone ever used a feature, but how quickly after subscription they adopted it and how frequently they return to it. Subscribers who save their first article within 48 hours of converting show different retention curves than those who take two weeks to discover the feature, even if both groups eventually use it regularly.
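
Adoption velocity reduces to "time from subscription to first use" per feature. A minimal sketch, assuming feature events arrive as `(event_name, timestamp)` pairs with names like `saved_article`; the names are assumptions standing in for your own tracking plan, and the 48-hour window comes from the example above.

```python
from datetime import datetime, timedelta


def adoption_latency(subscribed_at: datetime,
                     events: list[tuple[str, datetime]]) -> dict[str, timedelta]:
    """Time from subscription to the first post-subscription use of each feature."""
    first_use: dict[str, datetime] = {}
    for name, ts in events:
        if ts >= subscribed_at and (name not in first_use or ts < first_use[name]):
            first_use[name] = ts
    return {name: ts - subscribed_at for name, ts in first_use.items()}


def fast_adopter(latencies: dict[str, timedelta],
                 feature: str = "saved_article",
                 window: timedelta = timedelta(hours=48)) -> bool:
    """True if `feature` was first used within `window` of subscribing."""
    return feature in latencies and latencies[feature] <= window
```

Comparing retention curves for `fast_adopter` versus slower adopters of the same feature isolates velocity from eventual usage, which is the distinction the paragraph above draws.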

Social and Sharing Behavior

Subscribers who share your content externally—whether through social media, email forwards, or messaging apps—demonstrate advocacy that correlates with extended lifetime value. Sharing behavior indicates that someone finds your content valuable enough to recommend, which creates social accountability around their subscription decision. When a subscriber forwards your articles to colleagues or posts them to professional networks, they're publicly associating themselves with your brand.

The LTV correlation works through multiple mechanisms. First, sharers are typically more engaged with content because you don't recommend things you haven't consumed. Second, sharing creates informal commitments: if someone regularly posts your articles, canceling their subscription would disrupt their content curation routine. Third, sharers often drive referral growth, meaning they generate additional subscriber value beyond their own payments. A single subscriber who brings in three referrals over two years might deliver roughly 4x their direct LTV: their own subscription plus three referred subscriptions, assuming each referral is worth about as much as the referrer.

Tracking sharing requires instrumentation that captures share button clicks while accounting for the fact that much modern sharing happens through copy-pasted URLs or native mobile share sheets, which button-click events alone can miss. Privacy-conscious tracking approaches focus on aggregate share counts per subscriber cohort rather than tracking individual shared content across external platforms. You're measuring whether a subscriber shares frequently, not building profiles of their social connections. This distinction matters both for privacy compliance and for building ethical analytics practices.
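
A cohort-level aggregation can be built without retaining what was shared or with whom. This sketch assumes each captured share event is reduced to the sharing subscriber's ID before it reaches the function, and that a `cohort_of` mapping covers every subscriber in scope (so non-sharers count as zero). The cohort labels are hypothetical.

```python
from collections import Counter, defaultdict
from statistics import median


def cohort_share_stats(share_events: list[str],
                       cohort_of: dict[str, str]) -> dict[str, dict]:
    """Aggregate share activity per cohort; no article IDs or recipients are kept.

    share_events: one subscriber ID per captured share event.
    cohort_of: subscriber ID -> cohort label, covering all subscribers.
    """
    per_subscriber = Counter(share_events)
    cohorts: dict[str, list[int]] = defaultdict(list)
    for sub, cohort in cohort_of.items():
        cohorts[cohort].append(per_subscriber.get(sub, 0))
    return {
        cohort: {
            "subscribers": len(counts),
            "share_rate": sum(1 for c in counts if c > 0) / len(counts),
            "median_shares": median(counts),
        }
        for cohort, counts in cohorts.items()
    }
```

The output answers "how often does this cohort share?" while the raw events can be discarded, which keeps the measurement aligned with the privacy goal described above.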

Session Consistency Across Devices

Subscribers who access your content across multiple devices—desktop at work, mobile during commute, tablet on weekends—show higher retention than single-device users. Cross-device consistency indicates that your media brand has permeated different contexts in someone's life rather than being confined to one use case. This diversification makes your subscription more resilient to life changes: if someone switches jobs and loses their commute reading time, they might maintain weekend tablet habits.

Device diversity also serves as a proxy for subscription stickiness. Someone who's gone through the effort of installing your mobile app, bookmarking your site on their work computer, and setting up tablet access has made multiple small investments in accessibility. These friction-reducing steps make your content more convenient to access, which drives higher engagement, which reinforces the value perception that sustains subscriptions. It's a virtuous cycle initiated by cross-device adoption.

Analytics implementations must handle device tracking carefully, balancing identity resolution with privacy expectations. Authenticated user sessions make this straightforward: when someone logs in, you know it's the same subscriber regardless of device. For publishers supporting anonymous browsing, device attribution becomes fuzzier, but you can still measure whether individual subscriber accounts show access patterns across multiple device types without necessarily tracking every unauthenticated session.
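
For the authenticated case, device diversity is a count of distinct device types per account. A minimal sketch, assuming sessions are logged as `(subscriber_id, device_type)` with `None` for anonymous sessions, which are skipped rather than guessed at:

```python
from collections import defaultdict
from typing import Optional


def device_diversity(sessions: list[tuple[Optional[str], str]]) -> dict[str, int]:
    """Distinct device types per subscriber, using authenticated sessions only."""
    devices: dict[str, set[str]] = defaultdict(set)
    for subscriber_id, device_type in sessions:
        if subscriber_id is not None:  # ignore unauthenticated sessions
            devices[subscriber_id].add(device_type)
    return {sub: len(types) for sub, types in devices.items()}
```

A subscriber reading on desktop, mobile, and tablet scores 3, while anonymous desktop traffic contributes nothing to any account.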

Content Type Diversity and Niche Engagement

The final predictive metric combines breadth and depth: measuring both how many content categories a subscriber explores and whether they show passionate engagement with specific niches. Ideal subscribers read across your coverage areas—business, technology, culture, politics—demonstrating general interest in your editorial voice, while also showing concentrated engagement with particular topics. This combination suggests they value both your comprehensive coverage and your specialized expertise.

Content type diversity prevents subscriber burnout from topic fatigue. Someone who exclusively reads political coverage might experience exhaustion during intense news cycles and churn as a coping mechanism. Subscribers with diverse reading habits maintain engagement even when specific coverage areas become overwhelming. Meanwhile, niche passion for particular topics or columnists creates differentiated value that generic news aggregators can't replicate, reducing competitive vulnerability.
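
The source does not specify how to combine breadth and niche passion. One option, swapped in here as an assumption, is normalized Shannon entropy of the category mix for breadth plus the share of reads in the single most-read category for niche concentration. The sketch assumes each read is tagged with one section and that you know how many sections you publish.

```python
import math
from collections import Counter


def breadth_and_niche(categories: list[str], n_sections: int) -> tuple[float, float]:
    """Return (breadth, niche_share) for one subscriber's reads.

    breadth: Shannon entropy of the category mix divided by log(n_sections),
             so 1.0 means reading evenly across every section.
    niche_share: fraction of reads in the single most-read category.
    """
    counts = Counter(categories)
    total = sum(counts.values())
    if total == 0 or n_sections < 2:
        return 0.0, 0.0
    entropy = sum((c / total) * math.log(total / c) for c in counts.values())
    return entropy / math.log(n_sections), max(counts.values()) / total
```

A subscriber with five technology reads and one each in business, culture, and politics (out of four sections) scores about 0.77 on breadth with a niche share of 0.625: broad interest plus a clear specialty, the combination described above.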

Building Predictive Models Without Over-Engineering

The practical challenge for mid-level data analysts is implementing these eight metrics without building unwieldy dashboards that nobody actually uses. Start by instrumenting three metrics that your organization can realistically act on based on current resources and technical capabilities. If your editorial team can create personalized email campaigns but lacks sophisticated recommendation engine infrastructure, prioritize metrics that inform segmentation for email—like content depth score and return visit frequency—over those requiring real-time algorithmic adjustments.

A common mistake is tracking metrics without establishing intervention protocols. Knowing that low first-month visit frequency predicts churn is useless if your retention team has no process for reaching those at-risk subscribers. Before expanding your measurement framework, ensure you have clear workflows connecting metric thresholds to specific actions. This might mean setting up automated campaigns in your CRM when subscribers fall below engagement benchmarks, or creating weekly reports that retention managers actually review and respond to. Analytics without action is just expensive data storage.
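
One lightweight way to make the link between thresholds and actions explicit is a small rule table that your CRM sync or weekly report reads from. Everything here is hypothetical: the metric keys, the thresholds, and the campaign identifiers are placeholders for your own.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Rule:
    name: str
    triggered: Callable[[dict], bool]
    action: str  # hypothetical CRM campaign or review-queue identifier


RULES = [
    Rule("low_first_month_visits",
         lambda m: m["first_month_visits"] < 5,
         "onboarding_recs_email"),
    Rule("shallow_reading",
         lambda m: m["avg_depth"] < 30,
         "longform_digest_email"),
]


def actions_for(metrics: dict) -> list[str]:
    """Return the actions whose rules fire for one subscriber's metrics."""
    return [rule.action for rule in RULES if rule.triggered(metrics)]
```

Because each metric only enters the pipeline alongside a rule and an owner-facing action, adding a metric without an intervention becomes visibly incomplete.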

Strategic Integration With Editorial and Business Operations

The long-term value of engagement metrics emerges when they influence content strategy and resource allocation, not just retention campaigns. Publishers who share engagement data with editorial teams enable evidence-based decisions about coverage priorities and content formats. If investigative journalism shows 3x higher content depth scores than quick news aggregation, that finding should inform hiring decisions and content budgets, not just fuel feel-good conversations about journalistic values.

Forward-thinking media organizations are building feedback loops where subscriber engagement metrics inform both retention tactics and product development. When you discover that cross-format consumption predicts high LTV, the strategic response isn't just promoting your podcast to article readers—it's investing in format diversity as a competitive moat. These metrics transform from operational dashboards into strategic inputs that shape three-year roadmaps. For data analysts, this evolution means shifting from reporting on what happened to influencing what comes next, positioning analytics as a core business function rather than a support service.

Key Takeaways

• Content depth score and cross-format consumption patterns identify subscribers who've integrated your media brand into daily routines, predicting retention better than simple page view counts

• First-month return visit frequency serves as an early warning system for churn risk, allowing intervention before subscribers reach renewal decision points

• Feature adoption velocity and sharing behavior signal psychological investment that creates switching costs, making subscribers resilient to competitive offers

• Cross-device consistency and content diversity prevent engagement burnout while demonstrating that your subscription delivers value across multiple life contexts

Sources

[Reuters Institute Digital News Report 2023](https://reutersinstitute.politics.ox.ac.uk/digital-news-report/2023)

[Countly Product Analytics Documentation](https://countly.com/product-analytics)

[Media Subscription Retention Best Practices](https://www.niemanlab.org/2023/06/subscriber-retention-strategies/)

FAQ

Q: How many of these eight metrics should we implement simultaneously to build an effective LTV prediction model?

A: Start with three metrics that align with your organization's technical capabilities and existing intervention workflows, typically content depth score, return visit frequency, and one metric tied to your differentiated value proposition like cross-format consumption for multimedia publishers. Adding metrics without the infrastructure to act on them creates analysis paralysis rather than business value. Expand your measurement framework only after establishing clear processes connecting metric thresholds to retention campaigns or editorial decisions.

Q: What analytics tools can track these engagement metrics across web and mobile platforms for media publishers?

A: Product analytics platforms like Countly, Mixpanel, Amplitude, and Heap provide unified event tracking across web and mobile with the SDK implementations needed to maintain consistent user identities. Google Analytics 4 offers basic cross-platform tracking, though it's optimized more for marketing attribution than product engagement analysis. The choice depends on whether you need real-time segmentation capabilities, retroactive event analysis, or specific privacy compliance features like data residency requirements.

Q: How do we balance tracking detailed engagement patterns with subscriber privacy expectations in media publishing?

A: Focus analytics instrumentation on aggregate cohort behaviors rather than individual content consumption profiles, tracking that "Subscriber 847392 read 12 articles this week with 75% average depth" without necessarily logging every specific article title. Provide transparent privacy policies explaining what you measure and why, offer authenticated experiences where tracking enables personalized value like reading progress sync, and implement data retention policies that delete granular event data after aggregation. Privacy-conscious analytics builds subscriber trust while still enabling predictive modeling.
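
The aggregate-then-delete approach might look like this in practice. It is a minimal sketch, assuming raw read events are dicts with `subscriber_id`, a `depth` between 0 and 1, and possibly an `article_title` that is deliberately left out of the summary:

```python
from collections import defaultdict
from statistics import mean


def summarize_week(read_events: list[dict]) -> dict[int, dict]:
    """Collapse granular read events into one weekly summary per subscriber.

    Article titles and other content identifiers are intentionally not copied.
    """
    depths: dict[int, list[float]] = defaultdict(list)
    for event in read_events:
        depths[event["subscriber_id"]].append(event["depth"])
    return {
        sub: {"articles_read": len(d), "avg_depth": round(mean(d), 2)}
        for sub, d in depths.items()
    }
```

After the summaries are stored, the granular events can be deleted on whatever schedule your retention policy sets, leaving records like "Subscriber 847392: 12 articles, 0.75 average depth" for modeling.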