How to A/B Test In-Game Monetisation Without Hurting Player Experience

Getting monetisation right in games means finding the narrow path between revenue growth and player satisfaction. A/B testing offers product managers a way to validate pricing, purchase flows, and offer structures with real player data rather than assumptions. The challenge lies in running these experiments without degrading the experience for users who fund your game's development and expect fair, enjoyable gameplay in return.

Understanding the Tension Between Testing and Trust

Player trust is remarkably fragile when money enters the equation. When users suspect they're receiving different prices or offers based on invisible segmentation, community backlash can be swift and damaging. Games like Middle-earth: Shadow of War and Star Wars Battlefront II faced severe criticism not just for aggressive monetisation, but for the perception that systems were designed to manipulate rather than serve players. A/B testing monetisation carries similar risks when experiments are designed without considering how they shape players' sense of fairness.

The core tension exists because effective monetisation testing requires variation, but variation in pricing or rewards can feel arbitrary or exploitative to players who compare notes. This becomes especially sensitive in multiplayer environments where community discussion is constant. According to Newzoo's 2023 Global Games Market Report, 71% of mobile gamers who stop playing a game cite aggressive monetisation as a primary reason, a reminder of how quickly the relationship between player and publisher breaks down when monetary systems feel predatory. What works as a clean experiment in e-commerce can create lasting damage in a game where emotional investment runs deeper.

Successful A/B testing in this context requires thinking beyond conversion rates to consider lifetime value in its broadest sense, including community health, word-of-mouth, and brand reputation. Experiments that damage player trust may show short-term revenue gains while undermining the foundation needed for sustainable growth. Product managers need frameworks that account for these longer-term effects rather than optimising solely for immediate transactional metrics.

Designing Experiments That Respect Player Agency

The first principle of ethical monetisation testing is maintaining perceived fairness across test groups. Rather than testing drastically different price points for the same item, effective experiments focus on presentation, timing, bundling, and contextual framing. For example, testing whether a limited-time offer performs better when presented immediately after a level completion versus during a natural pause in gameplay preserves fairness while gathering actionable data. Both groups have access to the same economic value, but you're learning about optimal moments for engagement.
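Timing tests like this are easier to keep fair when assignment is deterministic, so a player sees the same variant in every session rather than flipping between them. A minimal Python sketch of salted, hash-based assignment follows; the experiment name, variant labels, SKU and price are hypothetical placeholders, not taken from any particular experimentation platform.

```python
import hashlib

# Hypothetical experiment and variant names, for illustration only.
EXPERIMENT = "offer_timing_v1"
VARIANTS = ("after_level_complete", "natural_pause")

# Same offer and price for everyone; only the moment it is shown differs.
OFFER = {"sku": "starter_bundle", "price_usd": 4.99}


def assign_variant(player_id, experiment=EXPERIMENT, variants=VARIANTS):
    """Deterministically map a player to a variant.

    Hashing "experiment:player_id" gives a stable bucket per player, and the
    experiment-name salt keeps buckets independent across experiments.
    """
    key = f"{experiment}:{player_id}".encode("utf-8")
    digest = hashlib.sha256(key).digest()
    bucket = int.from_bytes(digest[:8], "big") % len(variants)
    return variants[bucket]


def offer_for(player_id):
    """Return the shared offer plus the timing slot for this player's variant."""
    return {**OFFER, "show_at": assign_variant(player_id)}


if __name__ == "__main__":
    for pid in ("p-1001", "p-1002", "p-1003"):
        print(pid, offer_for(pid))
```

Changing the salt reshuffles every player, so it should stay fixed for the life of a test.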

Segmentation strategy matters enormously in how tests are perceived and in their statistical validity. Stratifying randomisation by progression stage or engagement level, rather than by crude spending history, creates more meaningful comparisons while avoiding the appearance of price discrimination. A test that shows different starter packs to new players within their first session is far less problematic than one that offers different prices to established players based on their previous purchase behaviour. The former respects that you're learning about onboarding, while the latter signals that loyalty might be punished with higher prices.
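One way to randomise within progression stages is stratified (blocked) randomisation: group eligible players by stage, shuffle each group, then cycle through the variants so every stage is split almost exactly evenly. The sketch below assumes the full list of eligible players is known up front and that `stage_of` is a hypothetical lookup you supply; spending history is deliberately not an input.

```python
import random
from collections import defaultdict


def stratified_assign(players, stage_of, variants=("control", "treatment"), seed=7):
    """Randomise players to variants separately within each progression stage.

    players:  iterable of player IDs eligible for the test
    stage_of: callable mapping a player ID to a stage label (hypothetical lookup)
    Returns a dict of player ID -> variant.
    """
    rng = random.Random(seed)  # fixed seed makes the assignment reproducible
    by_stage = defaultdict(list)
    for pid in players:
        by_stage[stage_of(pid)].append(pid)

    assignment = {}
    for stage in sorted(by_stage):  # fixed stage order so the seed gives stable results
        members = by_stage[stage]
        rng.shuffle(members)  # random order within the stage
        for i, pid in enumerate(members):
            # Cycling through variants keeps per-stage group sizes within one of each other.
            assignment[pid] = variants[i % len(variants)]
    return assignment


if __name__ == "__main__":
    # Hypothetical players and stages; no spending data is used anywhere.
    stages = {"p1": "tutorial", "p2": "tutorial", "p3": "early_game",
              "p4": "early_game", "p5": "mid_game", "p6": "mid_game"}
    print(stratified_assign(stages, stages.get))
```

Because it needs the whole population in advance, this suits batch enrolment; for players who arrive continuously, hash-based assignment evaluated within each stage is a common alternative.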

Transparency, even partial transparency, can defuse potential backlash. While you don't need to announce every test publicly, having clear policies about how offers are determined and being prepared to explain your testing philosophy helps when questions arise. Some studios include language in their terms of service about experimental features and pricing tests, setting expectations upfront. This doesn't mean revealing your entire testing roadmap, but it does mean thinking through how you'd explain your approach if a vocal player asks why their friend received a different offer.

Choosing Metrics That Reflect Long-Term Health

Conversion rate and average revenue per user are necessary metrics but dangerously incomplete when evaluating monetisation tests. A variant that increases immediate purchases by 15% while reducing day-30 retention by 8% has likely failed, even if the revenue impact looks positive in a two-week testing window. Product managers need measurement frameworks that capture both transactional outcomes and engagement quality across extended timeframes.
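A toy model makes the arithmetic concrete. The sketch below assumes, purely for illustration, that the 15% purchase lift is pull-forward that fades after two weeks, that the retention gap widens linearly to 8% (relative) by day 30 and then persists, and that retention follows a made-up power-law curve. None of these numbers come from real data.

```python
# Toy model: every number here is a hypothetical assumption for illustration.
LIFT = 0.15            # +15% purchases for the treatment variant...
LIFT_DAYS = 14         # ...assumed to be pull-forward that fades after two weeks
RETENTION_DROP = 0.08  # treatment retention ends up 8% (relative) below control
DROP_RAMP_DAYS = 30    # gap assumed to widen linearly until day 30, then persist


def control_retention(day):
    """Hypothetical power-law curve: share of the cohort still active on `day`."""
    return (day + 1) ** -0.5


def treatment_multiplier(day):
    """Treatment daily revenue relative to control (retention x purchase rate)."""
    retention = 1 - RETENTION_DROP * min(day, DROP_RAMP_DAYS) / DROP_RAMP_DAYS
    purchases = 1 + LIFT if day < LIFT_DAYS else 1.0
    return retention * purchases


def revenue_lift(horizon_days):
    """Relative revenue difference (treatment vs control) over the first N days.

    Revenue per active player per day is assumed constant, so it cancels out.
    """
    control = sum(control_retention(d) for d in range(horizon_days))
    treatment = sum(control_retention(d) * treatment_multiplier(d)
                    for d in range(horizon_days))
    return treatment / control - 1


for horizon in (14, 30, 90, 180):
    print(f"{horizon:>3}-day revenue lift: {revenue_lift(horizon):+.1%}")
```

Under these assumptions the variant is more than 10% ahead on revenue at day 14 but roughly 2.5% behind by day 180. Different assumptions move the break-even point, but a two-week window would show only the early gain either way.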

Retention cohorts split by test variant reveal whether monetisation changes affect player commitment to your game. If users exposed to more aggressive pricing show statistically significant drops in session frequency or play duration after making purchases, the test variant may be extracting value while degrading the core engagement loop. These patterns often emerge gradually, requiring test extensions beyond the one- or two-week windows common in web or mobile app testing. Game economies are complex systems where effects cascade over weeks or months.
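Comparing day-N retention between variants is a comparison of two proportions, so a two-proportion z-test is one simple way to check whether a gap is larger than noise. A minimal sketch using only Python's standard library; the cohort counts are invented to show a difference that only becomes significant by day 30.

```python
from math import erfc, sqrt


def two_proportion_z_test(success_a, n_a, success_b, n_b):
    """Two-sided z-test for a difference between two proportions (pooled variance).

    Returns (difference b - a, z statistic, two-sided p-value).
    """
    p_a, p_b = success_a / n_a, success_b / n_b
    pooled = (success_a + success_b) / (n_a + n_b)
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    p_value = erfc(abs(z) / sqrt(2))  # equals 2 * (1 - Phi(|z|)) for a standard normal
    return p_b - p_a, z, p_value


# Hypothetical cohorts: players enrolled, and players active on day N after enrolment.
cohorts = {
    "control": {"enrolled": 5000, "active": {1: 2200, 7: 1500, 30: 1000}},
    "treatment": {"enrolled": 5000, "active": {1: 2210, 7: 1470, 30: 920}},
}

control, treatment = cohorts["control"], cohorts["treatment"]
for day in (1, 7, 30):
    diff, z, p = two_proportion_z_test(
        control["active"][day], control["enrolled"],
        treatment["active"][day], treatment["enrolled"],
    )
    print(f"day {day:>2} retention: {diff:+.2%} (z={z:+.2f}, p={p:.3f})")
```

In this made-up data only the day-30 gap of 1.6 percentage points clears p < 0.05. Checking several days, or peeking repeatedly, inflates the false-positive rate, so it helps to fix the primary retention day before the test starts.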

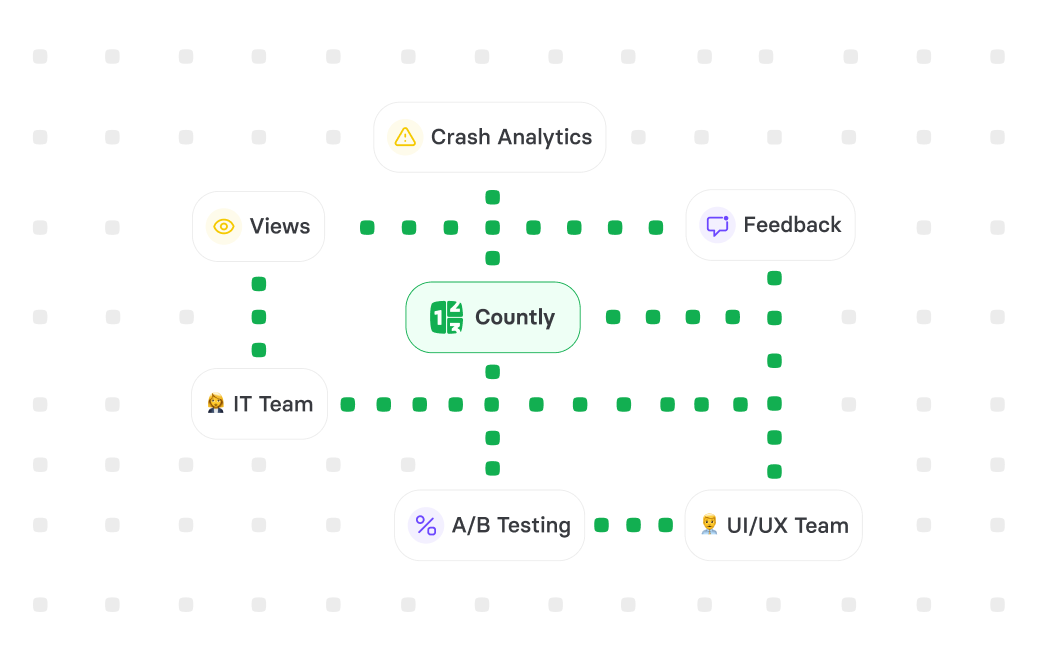

Sentiment signals provide qualitative context that pure behavioural metrics miss. Tracking support ticket volume and content, app store review sentiment, community forum discussions, and social media mentions related to monetisation gives early warning when a test crosses the line from optimisation to exploitation. Analytics platforms like Countly, Amplitude, or Mixpanel can record custom events that flag when users interact with pricing help documentation or attempt refunds, creating quantifiable proxies for friction and dissatisfaction. These signals should be weighted heavily in test evaluation, as they tend to predict longer-term consequences that won't appear in immediate revenue data.
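Friction events become a usable signal once they are rolled up per variant. The sketch below works on a generic export of `(player_id, event_name)` pairs rather than any vendor's API; the event names are hypothetical and would need to match whatever custom events you actually instrument.

```python
from collections import defaultdict

# Hypothetical custom event names; match these to your own instrumentation.
FRICTION_EVENTS = {"viewed_pricing_help", "refund_requested"}


def friction_rates(events, exposure):
    """Share of exposed players in each variant who hit at least one friction event.

    events:   iterable of (player_id, event_name) pairs, e.g. from a raw event export
    exposure: dict of player_id -> variant for players who saw the test
    """
    exposed = defaultdict(set)
    for pid, variant in exposure.items():
        exposed[variant].add(pid)

    flagged = defaultdict(set)
    for pid, name in events:
        if name in FRICTION_EVENTS and pid in exposure:
            flagged[exposure[pid]].add(pid)  # a set counts each player once

    return {variant: len(flagged[variant]) / len(players)
            for variant, players in exposed.items()}


if __name__ == "__main__":
    exposure = {"a": "control", "b": "control", "c": "treatment", "d": "treatment"}
    events = [
        ("a", "level_complete"),
        ("c", "viewed_pricing_help"),
        ("c", "refund_requested"),
        ("d", "viewed_pricing_help"),
        ("z", "refund_requested"),  # not in the test, so ignored
    ]
    print(friction_rates(events, exposure))
```

Counting players rather than raw events keeps one frustrated player who opens the help page ten times from dominating the rate.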

Practical Implementation Approaches and Common Pitfalls

Start with low-risk, high-learning tests before progressing to more sensitive experiments. Testing UI presentation, colour schemes, messaging, and offer timing allows you to understand how your specific audience responds to change before touching actual price points or reward structures. Many product managers make the mistake of beginning with aggressive price testing because revenue impact is immediate and measurable, but this approach skips the foundational learning about player psychology and preferences that makes later tests more effective and safer.

Sample size calculations for game monetisation require different approaches from typical web experiments. Gaming populations often include heavy spenders, commonly called whales, who represent a small percentage of users but a disproportionate share of revenue, creating statistical challenges. A test that reaches significance at p < 0.05 for conversion rate may still lack the power to detect meaningful differences in whale behaviour if these users represent only 2-3% of your population. Stratified sampling, or separate analyses for each spending tier, helps you understand effects across your revenue distribution rather than optimising for the median user at the expense of your most valuable segments.
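The standard normal-approximation sample size formula for comparing two proportions shows why a test sized for overall conversion can be blind to heavy spenders. In the sketch below, the 2.5% whale share, the 5.0% to 5.5% conversion change and the 40% to 36% whale repeat-purchase change are hypothetical inputs chosen for illustration.

```python
from math import ceil, sqrt
from statistics import NormalDist


def n_per_group(p1, p2, alpha=0.05, power=0.8):
    """Players per group to detect p1 -> p2 with a two-sided two-proportion z-test."""
    nd = NormalDist()
    z_alpha = nd.inv_cdf(1 - alpha / 2)
    z_beta = nd.inv_cdf(power)
    p_bar = (p1 + p2) / 2
    numerator = (z_alpha * sqrt(2 * p_bar * (1 - p_bar))
                 + z_beta * sqrt(p1 * (1 - p1) + p2 * (1 - p2))) ** 2
    return ceil(numerator / (p1 - p2) ** 2)


def achieved_power(p1, p2, n, alpha=0.05):
    """Approximate power of the same test when each group has n players."""
    nd = NormalDist()
    z_alpha = nd.inv_cdf(1 - alpha / 2)
    p_bar = (p1 + p2) / 2
    se_null = sqrt(2 * p_bar * (1 - p_bar) / n)
    se_alt = sqrt((p1 * (1 - p1) + p2 * (1 - p2)) / n)
    return nd.cdf((abs(p1 - p2) - z_alpha * se_null) / se_alt)


WHALE_SHARE = 0.025                   # hypothetical: whales are 2.5% of players
n_conv = n_per_group(0.050, 0.055)    # hypothetical conversion change
n_whale = n_per_group(0.40, 0.36)     # hypothetical whale repeat-purchase change

print("players per group for conversion:", n_conv)
print("whales per group:", n_whale, "-> players per group:", ceil(n_whale / WHALE_SHARE))
whales_in_conv_test = int(n_conv * WHALE_SHARE)
print(f"whale-metric power at the conversion sample size: "
      f"{achieved_power(0.40, 0.36, whales_in_conv_test):.0%}")
```

With these inputs the conversion test needs roughly 31,000 players per group, while the whale metric needs about 2,300 whales per group, or roughly 92,000 players; at the conversion sample size the whale comparison has only around 37% power. Revenue per user is heavy-tailed rather than a proportion, so sizing a test on ARPU needs a variance-based calculation or simulation instead.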

Strategic Considerations for Sustainable Monetisation Testing

Building a testing culture that balances growth with player respect requires buy-in beyond the product team. When incentive structures push teams toward short-term revenue metrics without accountability for retention or sentiment, even well-designed experiments get misused. Senior product managers should advocate for balanced scorecards that weight player satisfaction alongside financial outcomes, ensuring that quarterly revenue pressure doesn't override long-term thinking about community health and brand value.
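A balanced scorecard can be encoded as hard guardrails plus a weighted score, so a revenue win cannot override a retention or friction breach. The weights, thresholds and metric names below are hypothetical placeholders; a real scorecard would be agreed between product, finance and community teams.

```python
from dataclasses import dataclass


@dataclass
class VariantResult:
    """Relative changes versus control (0.05 means +5%). Metric names are hypothetical."""
    revenue_lift: float
    d30_retention_lift: float
    friction_rate_lift: float  # change in share of players hitting friction events
    sentiment_lift: float      # change in a sentiment index such as review score


# Hypothetical weights: a rise in friction counts against the variant.
WEIGHTS = {
    "revenue_lift": 0.40,
    "d30_retention_lift": 0.35,
    "friction_rate_lift": -0.15,
    "sentiment_lift": 0.10,
}
# Hypothetical guardrails: breaching either one blocks a launch regardless of score.
MIN_RETENTION_LIFT = -0.02
MAX_FRICTION_LIFT = 0.10


def evaluate(result: VariantResult):
    """Return (decision, weighted score, list of breached guardrails)."""
    breaches = []
    if result.d30_retention_lift < MIN_RETENTION_LIFT:
        breaches.append("retention")
    if result.friction_rate_lift > MAX_FRICTION_LIFT:
        breaches.append("friction")
    score = sum(weight * getattr(result, name) for name, weight in WEIGHTS.items())
    if breaches:
        return "reject", score, breaches
    return ("ship" if score > 0 else "hold"), score, breaches


if __name__ == "__main__":
    aggressive = VariantResult(0.15, -0.08, 0.05, -0.10)
    balanced = VariantResult(0.03, 0.005, 0.0, 0.0)
    for label, result in (("aggressive", aggressive), ("balanced", balanced)):
        print(label, evaluate(result))
```

In this sketch a variant with +15% revenue and -8% day-30 retention still earns a positive weighted score, but the retention guardrail rejects it; that separation is what stops quarterly revenue pressure from quietly overriding player health.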

The most sophisticated gaming companies treat monetisation testing as part of a broader player value optimisation strategy rather than pure price discovery. This means running parallel experiments on content quality, progression pacing, social features, and gameplay depth, recognising that willingness to pay derives from the overall experience, not just the offer screen. A player who encounters more enjoyable content through a successful gameplay test becomes more receptive to monetisation, creating a virtuous cycle where testing serves both business and player interests rather than trading one against the other.

Key Takeaways

• Design monetisation tests that vary presentation and context rather than creating unfair price differences between players, protecting perceived fairness while gathering actionable data

• Extend measurement windows beyond immediate conversion to capture retention, engagement quality, and sentiment signals that predict long-term consequences of monetisation changes

• Begin testing with low-risk variables like UI and timing before progressing to price points, building understanding of your audience's psychology and preferences

• Account for the statistical challenges of gaming populations where spending is heavily concentrated in small user segments that require adequate sample sizes for meaningful analysis

Sources

[Newzoo Global Games Market Report 2023](https://newzoo.com/resources/trend-reports/newzoo-global-games-market-report-2023-free-version)

[GameAnalytics: Mobile Game Monetization Best Practices](https://gameanalytics.com/blog/mobile-game-monetization-best-practices/)

[GDC: The Ethics of F2P Design](https://gdconf.com/news/ethics-f2p-design-gdc-2023-summary)

FAQ

Q: How long should I run a monetisation A/B test in a game?

A: Most monetisation tests need a minimum four-week window to capture player behaviour through typical spending cycles and detect retention effects that emerge after purchase. Games with longer core loops or seasonal events may need eight to twelve weeks for meaningful results. The key is ensuring you measure beyond the immediate transaction to understand whether the variant affects ongoing engagement and subsequent monetisation opportunities.

Q: Should I exclude existing paying users from monetisation tests?

A: This depends on what you're testing and your tolerance for risk. For major price changes or new monetisation systems, limiting tests to new users or free players reduces the chance of alienating your revenue base. For optimisations to existing flows like purchase confirmation screens or bundle presentation, including current payers provides valuable signal. Always segment results by prior spending behaviour to understand differential effects across player types.

Q: How do I handle player complaints about seeing different offers than their friends?

A: Acknowledge the question directly and explain that you test different approaches to find what works best for players while keeping the game sustainable. Emphasise that all players have access to equivalent value and that tests help improve the experience for everyone over time. If complaints indicate a test has crossed into unfair territory, be prepared to end the experiment early and equalise offers, as protecting trust matters more than completing any single test.